The Argument for Generalists in Web Development

“An expert is one who knows more and more about less and less until he knows absolutely everything about nothing” -@mastersje

My grandfather was a carpenter. He had an immense workbench with a pegboard that held hundreds of hammers, screwdrivers, wrenches, saws, drills, planes, and awls. Some were power tools and some were hand tools; some were circular, some rotary, some reciprocating. Each had its own beauty and its own use. The man could build a picture frame, a desk, a dock, or an entire house. He may not have wielded every one of them like an expert, but he knew which one a situation called for, and he certainly knew how to use them in concert.

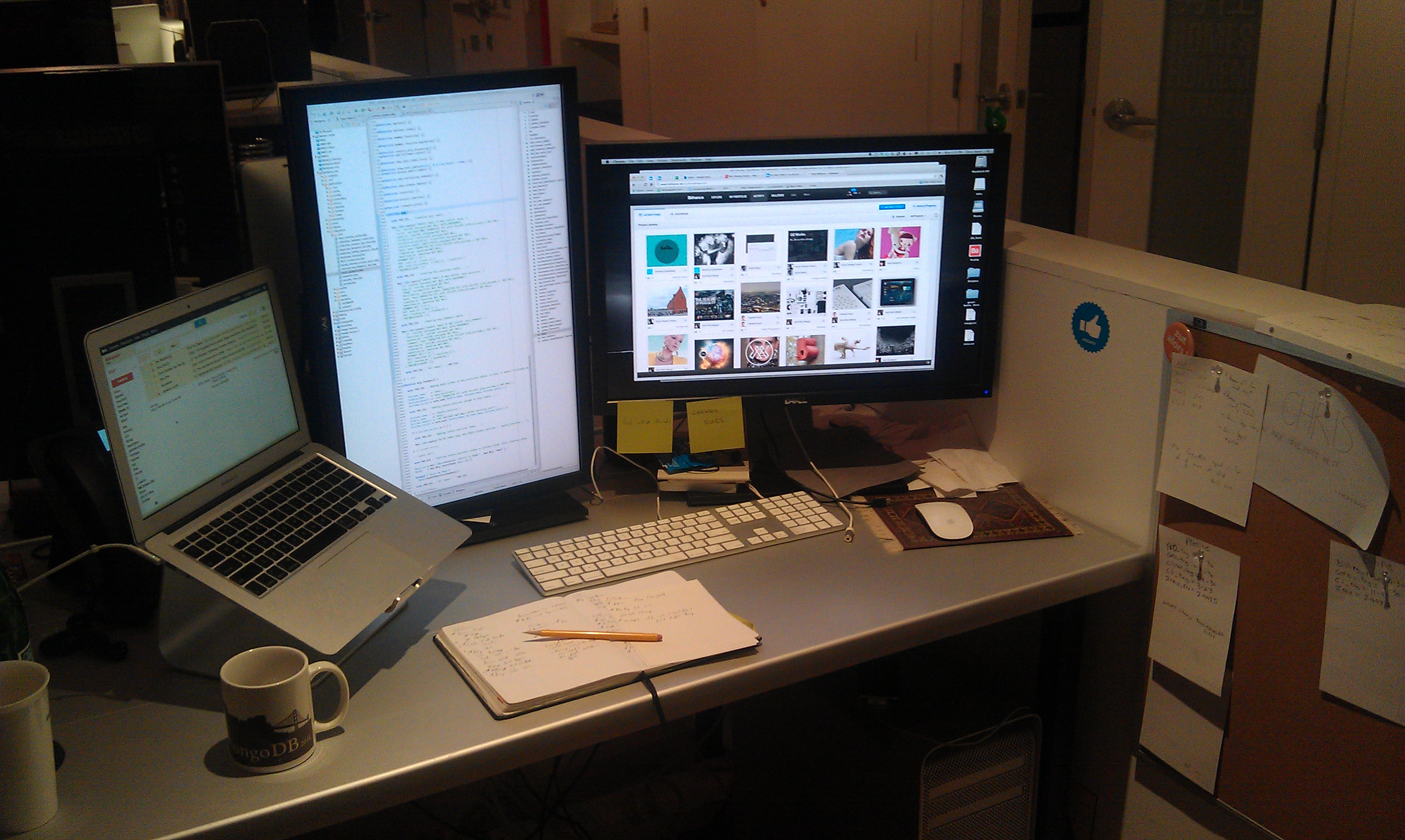

Two generations later, I have become a web developer, and I can’t help but notice the similarities between our two crafts. He worked in wood; I work in bits and bytes. Websites, especially big ones, are not the product of a single technology but the result of many technologies interacting seamlessly. Building a house is no different: framing, roofing, and laying a foundation are all separate skills that require vastly different tools. Part of the challenge of being a web developer is managing many technologies at once.

Great web developers are carpenters. As in carpentry, there is always a right tool for the job. Infrastructure that doesn’t play well with the software running on it is bound for failure, and software that doesn’t fit its hardware, kernel, or operating system won’t run well. As experts in web development, we can know less about each individual technology, but we should know more about how they work together. Tailoring software, and choosing combinations of software packages that fit each other, is the magic bullet that solves problems quickly and at scale.

In the context of early-stage startups, generalists are a better bet for getting a product up and running in production. Even as a team grows, having generalists around means you can task a single developer with building an entire feature. With a bit of support from specialists, that developer can often ship a feature faster than a team of a couple of specialists. When widespread issues occur, I prefer to have a generalist in my corner, because they typically understand the connective tissue of a website very well and are willing to put in the time debugging from a variety of perspectives.

None of this is to say that specialists don’t have their place in web development. The web is such an innovative medium that it has spawned dozens of technologies, such as Node.js, that require a deep pool of expertise to work in. Whole arrays of techniques and frameworks exist to serve a single technology. Generalists typically won’t have the depth necessary to pull off an awe-inspiring, truly nasty implementation. However, they’ll have the right instincts to integrate it with the rest of the stack, deploy it, and make it do something meaningful that a specialist might not be able to do alone.